1 Research practices and assessment of research misconduct

Research practices directly affect the epistemological pursuit of science: responsible conduct of research affirms it; research misconduct undermines it. Typically, a responsible scientist is conceptualized as objective, meticulous, skeptical, rational, and not subject to external incentives such as prestige or social pressure. Research misconduct, on the other hand, is formally defined (e.g., in regulatory documents) as three types of condemned, intentional behaviors: fabrication, falsification, and plagiarism (FFP; Office of Science and Technology Policy 2000). Research practices that are neither conceptualized as responsible nor defined as research misconduct could be considered questionable research practices, which are practices that are detrimental to the research process (National Academy of Sciences and Medicine 1992; Steneck 2006). For example, the misapplication of statistical methods can increase the number of false results and is therefore not responsible. At the same time, such misapplication cannot be deemed research misconduct because it falls outside the defined scope of FFP. Such undefined and potentially questionable research practices have been widely discussed in the field of psychology in recent years (John, Loewenstein, and Prelec 2012; Nosek and Bar-Anan 2012; Nosek, Spies, and Motyl 2012; Open Science Collaboration 2015; Simmons, Nelson, and Simonsohn 2011).

This chapter discusses the responsible conduct of research, questionable research practices, and research misconduct. For each of these three, we expand on what it means, what researchers currently do, and how it can be facilitated (i.e., responsible conduct) or prevented (i.e., questionable practices and research misconduct). These research practices encompass the entire research practice spectrum proposed by Steneck (2006), where responsible conduct of research is the ideal behavior at one end, FFP is the worst behavior at the other end, and (potentially) questionable practices lie in between.

1.1 Responsible conduct of research

1.1.1 What is it?

Responsible conduct of research is often defined in terms of a set of abstract, normative principles. One such set of norms of good science (Anderson et al. 2010; Merton 1942) is accompanied by a set of counternorms (Anderson et al. 2010; Mitroff 1974) that promulgate irresponsible research. These six norms and counternorms can serve as a valuable framework to reflect on the behavior of a researcher and are included in Table 1.1.

| Norm | Description of norm | Counternorm |
|---|---|---|
| Universalism | Evaluate results based on pre-established and non-personal criteria | Particularism |
| Communality | Freely and widely share findings | Secrecy |
| Disinterestedness | Results not corrupted by personal gains | Self-interestedness |
| Skepticism | Scrutinize all findings, including own | Dogmatism |
| Governance | Decision-making in science is done by researchers | Administration |
| Quality | Evaluate researchers based on the quality of their work | Quantity |

Besides abiding by these norms, responsible conduct of research consists of both research integrity and research ethics (Shamoo and Resnik 2009). Research integrity is the adherence to professional standards and rules that are well defined and uniform, such as the standards outlined by the American Psychological Association (2010a). Research ethics, on the other hand, is “the critical study of the moral problems associated with or that arise in the course of pursuing research” (Steneck 2006), which is abstract and pluralistic. As such, research ethics is more fluid than research integrity and is supposed to fill in the gaps left by research integrity (Koppelman-White 2006). For example, not fabricating data is the professional standard in research, but research ethics informs us on why it is wrong to fabricate data. This highlights that ethics and integrity are not the same, but rather two related constructs. Discussion or education should therefore not only reiterate the professional standards, but also include training on developing ethical and moral principles that can guide researchers in their decision-making.

1.1.2 What do researchers do?

Even though most researchers subscribe to the aforementioned normative principles, fewer researchers actually adhere to them in practice and many researchers perceive their scientific peers to adhere to them even less. A survey of 3,247 researchers by Anderson, Martinson, and De Vries (2007) indicated that researchers subscribed to the norms more than they actually behaved in accordance with these norms. For instance, a researcher may be committed to sharing his or her data (the norm of communality), but might shy away from actually sharing data at an early stage out of fear of being scooped by other researchers. This result aligns with surveys showing that many researchers express a willingness to share data, but often fail to do so when asked (Krawczyk and Reuben 2012; Savage and Vickers 2009). Moreover, although researchers admit they do not adhere to the norms as much as they subscribe to them, they still regard themselves as adhering to the norms more so than their peers. For counternorms, this pattern was reversed. These results indicate that researchers systematically evaluate their own conduct as more responsible than other researchers’ conduct.

This gap between subscription and actual adherence to the normative principles is called normative dissonance and could potentially be due to substandard academic education or lack of open discussion on ethical issues. Anderson et al. (2007) suggested that different types of mentoring affect the normative behavior by a researcher. Most importantly, ethics mentoring (e.g., discussing whether a mistake that does not affect conclusions should result in a corrigendum) might promote adherence to the norms, whereas survival mentoring (e.g., advising not to submit a non-crucial corrigendum because it could be bad for your scientific reputation) might promote adherence to the counternorms. Ethics mentoring focuses on discussing ethical issues (Anderson et al. 2007) that might facilitate higher adherence to norms due to increased self-reflection, whereas survival mentoring focuses on how to thrive in academia and focuses on building relationships and specific skills to increase the odds of being successful.

1.1.3 Improving responsible conduct

Increasing exposure to ethics education throughout the research career might improve responsible research conduct. Research indicated that weekly 15-minute ethics discussions facilitated confidence in recognizing ethical problems in a way that participants deemed both effective and enjoyable (Peiffer, Hugenschmidt, and Laurienti 2011). Such forms of active education are fruitful because they teach researchers practical skills that can change their research conduct and improve prospective decision making, where a researcher rapidly assesses the potential outcomes and ethical implications of the decision at hand, instead of in hindsight (Whitebeck 2001). Passive education on guidelines is not expected to be efficacious in producing behavioral change (Kornfeld 2012), considering that participants rarely learn useful skills or experience a change in attitudes as a consequence of such passive education (Plemmons, Brody, and Kalichman 2006).

Moreover, in order to accommodate the normative principles of scientific research, the professional standards, and a researcher’s moral principles, transparent research practices can serve as a framework for responsible conduct of research. Transparency in research embodies the normative principles of scientific research: universalism is promoted by improved documentation; communality is promoted by publicly sharing research; disinterestedness is promoted by increasing accountability and exposure of potential conflicts of interest; skepticism is promoted by allowing for verification of results; governance is promoted by improved project management by researchers; higher quality is promoted by the other norms. Professional standards also require transparency. For instance, the APA and publication contracts require researchers to share their data with other researchers (American Psychological Association 2010a). Even though authors often state that their data are available upon request, such requests frequently fail (Krawczyk and Reuben 2012; Wicherts et al. 2006), which results in a failure to adhere to professional standards. Openness regarding the choices made (e.g., on how to analyze the data) during the research process will promote active discussion of prospective ethics, increasing the self-reflective capacities of the individual researcher and improving the collective evaluation of the research (e.g., by peer reviewers).

In the remainder of this section we outline a type of project management, founded on transparency, which seems apt to be the new standard within psychology (Nosek and Bar-Anan 2012; Nosek, Spies, and Motyl 2012). Transparency guidelines for journals have also been proposed (Nosek et al. 2015) and the outlined project management adheres to these guidelines from an author’s perspective. The provided format focuses on empirical research and is certainly not the only way to apply transparency to adhere to responsible conduct of research principles.

1.1.3.1 Transparent project management

Research files can be easily managed by creating an online project at the Open Science Framework (OSF; osf.io). The OSF is free to use and provides extensive project management facilities to encourage transparent research. Project management via this tool has been tried and tested in, for example, the Many Labs project (R. A. Klein et al. 2014) and the Reproducibility project (Open Science Collaboration 2015). Research files can be manually uploaded by the researcher or automatically synchronized (e.g., via Dropbox or GitHub). Using the OSF is easy and is explained in depth at osf.io/getting-started.

The OSF provides the tools to manage a research project, but how to apply these tools remains a question. Such online management of materials, information, and data is preferable to a more informal system that lacks transparency and often rests strongly on particular contributors’ implicit knowledge.

As a way to organize a version-controlled project, we suggest a ‘prune-and-add’ template, where the major elements of most research projects are included but which can be specified and extended for specific projects. This template includes folders as specified in Table 1.2, which covers many of the research stages. The template can be readily duplicated and adjusted on the OSF for practical use in similar projects (like replication studies; osf.io/4sdn3).

| Folder | Summary of contents |
|---|---|
| analyses | Analysis scripts (e.g., as reported in the paper, exploratory files) |
| archive | Outdated files or files not of direct value (e.g., unused code) |
| bibliography | Reference library or related articles (e.g., Endnote library, PDF files) |
| data | All data files used (e.g., raw data, processed data) |
| figures | Figures included in the manuscript and code for figures |
| functions | Custom functions used (e.g., SPSS macro, R scripts) |
| materials | Research materials specified per study (e.g., survey questions, stimuli) |
| preregister | Preregistered hypotheses, analysis plans, research designs |
| submission | Manuscript, submissions per journal, and review rounds |
| supplement | Files that supplement the research project (e.g., notes, codebooks) |

This suggested project structure also includes a folder for preregistration files of hypotheses, analyses, and research design. Preregistering these ensures that the researcher does not hypothesize after the results are known (Kerr 1998), and also assures readers that the results presented as confirmatory were actually confirmatory (Chambers 2015; Wagenmakers et al. 2012). The preregistration of analyses also ensures that the statistical analysis chosen to test the hypothesis was not dependent on the result. Such preregistrations document the chronology of the research process and also ensure that researchers actively reflect on the decisions they make prior to running a study, such that the quality of the research might be improved.
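
The folder layout in Table 1.2 can also be created locally before synchronizing it with an online project. The following is a minimal sketch in Python, not part of the OSF template itself: the function name `create_project_skeleton`, its `prune` and `add` parameters, and the example folder `ethics` are hypothetical and only illustrate the ‘prune-and-add’ idea.

```python
from pathlib import Path
import tempfile

# Folder names copied from Table 1.2.
TEMPLATE_FOLDERS = [
    "analyses", "archive", "bibliography", "data", "figures",
    "functions", "materials", "preregister", "submission", "supplement",
]


def create_project_skeleton(root, prune=(), add=()):
    """Create the template folders under `root`, minus `prune`, plus `add`.

    Returns the sorted list of folder names that the project now contains.
    """
    pruned = set(prune)
    wanted = [name for name in TEMPLATE_FOLDERS if name not in pruned]
    wanted += [name for name in add if name not in wanted]
    for name in wanted:
        (Path(root) / name).mkdir(parents=True, exist_ok=True)
    return sorted(wanted)


# Example: a project without a bibliography folder but with a
# (hypothetical) folder for ethics approval documents.
with tempfile.TemporaryDirectory() as tmp:
    created = create_project_skeleton(tmp, prune=["bibliography"], add=["ethics"])
    print(created)
```

Keeping the folder names in one list makes it easy to reuse the same structure across similar projects, such as replication studies.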

Also available in this project template is a file to specify contributions to a research project. This is important for determining authorship, responsibility, and credit of the research project. With more collaborations occurring throughout science and increasing specialization, researchers cannot be expected to carry responsibility for the entirety of large multidisciplinary papers, but authorship does currently imply this. Consequently, authorship has become too imprecise a measure for specifying contributions to a research project, and a more precise approach is required.

Besides structuring the project and documenting the contributions, responsible conduct encourages independent verification of the results to reduce particularism. A co-pilot model has been introduced previously (Veldkamp et al. 2014; Wicherts 2011), where at least two researchers independently run all analyses based on the raw data. Such verification of research results enables streamlined reproduction of the results by outsiders (e.g., are all files readily available? are the files properly documented? do the analyses work on someone else’s computer?), helps find potential errors (Bakker and Wicherts 2011; Nuijten, Hartgerink, et al. 2015), and increases confidence in the results. We therefore encourage researchers to incorporate such a co-pilot model into all empirical research projects.

1.2 Questionable research practices

1.2.1 What is it?

Questionable research practices are defined as practices that are detrimental to the research process (National Academy of Sciences and Medicine 1992). Examples include inadequate research documentation, failing to retain research data for a sufficient amount of time, and actively refusing access to published research materials. However, questionable research practices should not be confused with questionable academic practices, such as academic power play, sexism, and scooping.

Attention to questionable practices in psychology has (re-)arisen in recent years, in light of the so-called “replication crisis” (Makel, Plucker, and Hegarty 2012). Pinpointing which factors initiated doubts about the reproducibility of findings is difficult, but most notable seems to be an increased awareness that widely accepted practices are statistically and methodologically questionable.

Besides affecting the reproducibility of psychological science, questionable research practices align with the aforementioned counternorms in science. For instance, confirming prior beliefs by selectively reporting results is a form of dogmatism; skepticism and communality are violated by not providing peers with research materials or details of the analysis; universalism is hindered by lack of research documentation; governance deteriorates when the public loses its trust in the research system because of signs of the effects of questionable research practices (e.g., repeated failures to replicate) and politicians initiate new forms of oversight.

Suppose a researcher fails to find the (a priori) hypothesized effect, subsequently decides to inspect the effect for each gender, and finds an effect only for females. Such an ad hoc exploration of the data is perfectly fine if it were presented as an exploration (Wigboldus and Dotsch 2015). However, if the subsequent publication only mentions the effect for females and presents it as confirmatory, instead of exploratory, this is questionable. The \(p\)-values should have been corrected for multiple testing (three hypotheses rather than one were tested) and the result is clearly not as convincing as one that would have been hypothesized a priori.
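
To make the multiple-testing point concrete, the sketch below applies a Bonferroni correction, which is one common correction and an assumption here (the text does not prescribe a specific method). The three \(p\)-values are hypothetical and chosen only to mirror the example of an overall test plus one test per gender.

```python
def bonferroni_adjust(p_values):
    """Return Bonferroni-adjusted p-values: min(1, p * number_of_tests)."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


ALPHA = 0.05

# Hypothetical p-values for the three tests in the example
# (overall effect, males only, females only); these are not real data.
p_raw = {"overall": 0.40, "males": 0.70, "females": 0.03}

p_adj = dict(zip(p_raw, bonferroni_adjust(list(p_raw.values()))))
significant = {test: p < ALPHA for test, p in p_adj.items()}

for test in p_raw:
    print(f"{test}: raw p = {p_raw[test]:.2f}, adjusted p = {p_adj[test]:.2f}, "
          f"significant = {significant[test]}")
```

In this hypothetical case the effect for females is below .05 when taken in isolation, but no longer is once the three tests are accounted for.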

These biases occur in part because researchers, editors, and peer-reviewers are biased to believe that statistical significance has a bearing on the probability of a hypothesis being true. Such misinterpretation of the \(p\)-value is not uncommon (Cohen 1994). The perception that statistical significance bears on the probability of a hypothesis reflects an essentialist view of \(p\)-values rather than a stochastic one; the belief that if an effect exists, the data will mirror this with a small \(p\)-value (Sijtsma, Veldkamp, and Wicherts 2015). Such problematic beliefs enhance publication bias, because researchers are less likely to believe in their nonsignificant results and are less likely to submit that work for publication (Franco, Malhotra, and Simonovits 2014). This enforces the counternorm of secrecy by keeping nonsignificant results in the file-drawer (Rosenthal 1979), which in turn greatly biases the picture emerging from the literature.

1.2.2 What do researchers do?

Most questionable research practices are hard to retrospectively detect, but one questionable research practice, the misreporting of statistical significance, can be readily estimated and could provide some indication of how widespread questionable practices might be. Errors that result in the incorrect conclusion that a result is significant are often called gross errors, which indicates that the decision error had substantive effects. Large scale research in psychology has indicated that 12.5-20% of sampled articles include at least one such gross error, with approximately 1% of all reported test results being affected by such gross errors (Bakker and Wicherts 2011; Nuijten, Hartgerink, et al. 2015; Veldkamp et al. 2014).
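
The idea behind detecting such misreporting can be sketched as follows: recompute the \(p\)-value from the reported test statistic and compare it with the reported \(p\)-value. This is a simplified illustration, not the procedure used in the cited studies. It assumes a two-sided \(z\) test, a rounding tolerance of .005, and hypothetical function names and example values.

```python
from statistics import NormalDist


def two_sided_p(z):
    """Two-sided p-value for a z statistic under the standard normal."""
    return 2.0 * (1.0 - NormalDist().cdf(abs(z)))


def check_reported_p(z, reported_p, alpha=0.05, tol=0.005):
    """Classify a reported result by recomputing its p-value.

    'gross error'  : reported as significant, recomputed as not significant
    'inconsistent' : reported and recomputed p differ by more than `tol`
    'consistent'   : otherwise
    """
    recomputed = two_sided_p(z)
    if reported_p < alpha <= recomputed:
        return "gross error"
    if abs(recomputed - reported_p) > tol:
        return "inconsistent"
    return "consistent"


# Hypothetical reported results (not taken from any real paper).
checks = {
    "z = 1.70, p = .04": check_reported_p(1.70, 0.04),
    "z = 2.50, p = .012": check_reported_p(2.50, 0.012),
    "z = 2.50, p = .03": check_reported_p(2.50, 0.03),
}
for label, verdict in checks.items():
    print(label, "->", verdict)
```

Following the definition in the text, only a reported significant result that is not significant on recomputation is labeled a gross error here; other mismatches are flagged as inconsistencies.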

Nonetheless, the prevalence of questionable research practices remains largely unknown and reproducibility of findings has been shown to be problematic. In one large-scale project, only 36% of findings published in three main psychology journals in a given year could be replicated (Open Science Collaboration 2015). Effect sizes were smaller in the replication than in the original study in 80% of the studies, and it is quite possible that this low replication rate and decrease in effect sizes are mostly due to publication bias and the use of questionable research practices in the original studies.

1.2.3 How can it be prevented?

Counternorms such as self-interestedness, dogmatism, and particularism are discouraged by transparent practices because practices that arise from them will become more apparent to scientific peers.

Therefore transparency guidelines have been proposed and signed by editors of over 500 journals (Nosek et al. 2015). To different degrees, signatories of these guidelines actively encourage, enforce, and reward data sharing, material sharing, preregistration of hypotheses or analyses, and independent verification of results. The effects of these guidelines are not yet known, considering their recent introduction. Nonetheless, they provide a strong indication that the awareness of problems is trickling down into systemic changes that prevent questionable practices.

Most effective might be preregistrations of research design, hypotheses, and analyses, which reduce particularism of results by providing an a priori research scheme. Preregistration also exposes behaviors such as optional stopping, where extra participants are sampled until statistical significance is reached (Armitage, McPherson, and Rowe 1969), or the dropping of conditions or outcome variables (Franco, Malhotra, and Simonovits 2016). Knowing that researchers outlined their research process beforehand, and seeing that they adhered to it, helps assure readers that results presented as confirmatory are indeed confirmatory rather than exploratory in nature (Wagenmakers et al. 2012), and that questionable practices did not culminate in those results.
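
Why optional stopping is problematic can be illustrated with a small simulation. The sketch below is an illustration under stated assumptions, not an analysis from the cited work: data are drawn from a standard normal distribution with no true effect, a two-sided \(z\) test with known standard deviation is used, and the sample sizes (start at 20, add 10 at a time, stop at 50) are arbitrary choices.

```python
import random
from statistics import NormalDist, fmean

Z_CRIT = NormalDist().inv_cdf(0.975)  # two-sided test at alpha = .05


def is_significant(data):
    """z test of mean = 0, assuming a known standard deviation of 1."""
    z = fmean(data) * len(data) ** 0.5
    return abs(z) > Z_CRIT


def run_null_study(rng, n_start=20, n_step=10, n_max=50):
    """Simulate one study without a true effect.

    Returns (significant at the first look, significant at any look),
    where further looks add `n_step` participants until `n_max`.
    """
    data = [rng.gauss(0.0, 1.0) for _ in range(n_start)]
    significant_at_first_look = is_significant(data)
    significant_at_any_look = significant_at_first_look
    while not significant_at_any_look and len(data) < n_max:
        data.extend(rng.gauss(0.0, 1.0) for _ in range(n_step))
        significant_at_any_look = is_significant(data)
    return significant_at_first_look, significant_at_any_look


def false_positive_rates(n_studies=2000, seed=1):
    """False positive rate for a fixed sample size versus optional stopping."""
    rng = random.Random(seed)
    results = [run_null_study(rng) for _ in range(n_studies)]
    fixed = sum(first for first, _ in results) / n_studies
    optional = sum(any_look for _, any_look in results) / n_studies
    return fixed, optional


fixed_rate, optional_rate = false_positive_rates()
print(f"fixed n: {fixed_rate:.3f}, optional stopping: {optional_rate:.3f}")
```

Because every study that is significant at the first look is also counted under optional stopping, the optional-stopping rate can never be lower than the fixed-sample rate; the additional looks only add false positives.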

Moreover, use of transparent practices even allows for unpublished research to become discoverable, effectively eliminating publication bias. Eliminating publication bias would make the research system an estimated 30 times more efficient (Van Assen et al. 2014). Considering that unpublished research is not indexed in the familiar peer-reviewed databases, infrastructures to search through repositories similar to the OSF are needed. One such infrastructure is being built by the Center for Open Science (SHARE; osf.io/share), which searches through repositories similar to the OSF (e.g., figshare, Dryad, arXiv).

1.3 Research misconduct

1.3.1 What is it?

As mentioned at the beginning of the article, research misconduct has been defined as fabrication, falsification, and plagiarism (FFP). However, it does not include “honest error or differences of opinion” (Office of Science and Technology Policy 2000; Resnik and Stewart 2012). Fabrication is the making up of datasets entirely. Falsification is the adjustment of a set of data points to ensure the wanted results. Plagiarism is the direct reproduction of others’ creative work without properly attributing it. These behaviors are condemned by many institutions and organizations, including the American Psychological Association (2010a).

Research misconduct is clearly the worst type of research practice, but despite it being clearly wrong, it can be approached from both a scientific and a legal perspective (Wicherts and Van Assen 2012). The scientific perspective condemns research misconduct because it undermines the pursuit of knowledge. Fabricated or falsified data are scientifically useless because they do not add any knowledge that can be trusted. Use of fabricated or falsified data is detrimental to the research process and to knowledge building. It leads other researchers or practitioners astray, potentially leading to waste of research resources when pursuing false insights or unwarranted use of such false insights in professional or educational practice.

The legal perspective sees research misconduct as a form of white-collar crime, although in practice it is typically not subject to criminal law but rather to administrative or labor law. The legal perspective requires intention to commit research misconduct, whereas the scientific perspective requires data to be collected as described in a research report, regardless of intent. In other words, the legal perspective seeks to answer the question “was misconduct committed with intent and by whom?”

The scientific perspective seeks to answer the question “were results invalidated because of the misconduct?” For instance, a paper reporting data that could not have been collected with the materials used in the study (e.g., the reported means lie outside the possible values on the psychometric scale) is invalid scientifically. The impossible results could be due to research misconduct but also due to honest error.
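
A check of this kind is simple to automate. The sketch below flags reported means that fall outside the range of the response scale; the function name, the 7-point scale, and the reported values are hypothetical and serve only as an illustration.

```python
def impossible_means(reported_means, scale_min, scale_max):
    """Return the reported means that lie outside the scale's possible range."""
    return {
        label: mean
        for label, mean in reported_means.items()
        if not scale_min <= mean <= scale_max
    }


# Hypothetical means reported for a 7-point scale (1 to 7); not real data.
reported = {"control": 4.2, "treatment": 7.6}
flagged = impossible_means(reported, scale_min=1, scale_max=7)
print(flagged)
```

Such a flag only shows that the reported result is scientifically invalid; it says nothing about whether the cause is misconduct or honest error.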

Hence, a legal verdict of research misconduct requires proof that a certain researcher falsified or fabricated the data. The scientific assessment of the problems is often more straightforward than the legal assessment of research misconduct. The former can be done by peer reviewers, whereas the latter involves regulations and a well-defined procedure allowing the accused to respond to the accusations.

Throughout this part of the article, we focus on data fabrication and falsification, which we will illustrate with examples from the Diederik Stapel case — a case we are deeply familiar with. His fraudulent activities resulted in 58 retractions (as of May, 2016), making this the largest known research misconduct case in the social sciences.

1.3.2 What do researchers do?

Given that research misconduct represents such a clear violation of the normative structure of science, it is difficult to study how many researchers commit research misconduct and why they do it. Estimates based on self-report surveys suggest that around 2% of researchers admit to having fabricated or falsified data during their career (Fanelli 2009). Although the number of retractions due to misconduct has risen in the last decades, both across the sciences in general (Fang, Steen, and Casadevall 2012) and in psychology in particular (Margraf 2015), this number still represents a fairly low number in comparison to the total number of articles in the literature (Wicherts, Hartgerink, and Grasman 2016). Similarly, the number of researchers found guilty of research misconduct is relatively low, suggesting that many cases of misconduct go undetected; the actual rate of research misconduct is unknown. Little research has addressed why researchers fabricate or falsify data, but it is commonly accepted that they do so out of self-interest in order to obtain publications and further their career. What we know from some exposed cases, however, is that fabricated or falsified data are often quite extraordinary and so could sometimes be exposed as not being genuine.

Humans, including researchers, are quite bad at recognizing and fabricating probabilistic processes (Mosimann et al. 2002; Mosimann, Wiseman, and Edelman 1995). For instance, humans frequently think that, after five coin flips that result in heads, the next coin flip is more likely to be tails than heads; this is the gambler’s fallacy (Tversky and Kahneman 1974). Inferential testing is based on sampling; by extension, variables should be of probabilistic origin and have certain stochastic properties. Because humans have problems adhering to these probabilistic principles, fabricated data are likely to deviate from their supposed probabilistic origins at some level of the data (Haldane 1948).
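
The intuition behind the gambler’s fallacy can be checked with a short simulation. The sketch below assumes a fair coin and independent flips; the number of flips and the seed are arbitrary choices.

```python
import random


def heads_rate_after_streak(n_flips=200_000, streak=5, seed=2016):
    """Estimate P(heads) on the flip that follows `streak` heads in a row.

    Returns the estimated rate and the number of streaks it is based on.
    """
    rng = random.Random(seed)
    flips = [rng.random() < 0.5 for _ in range(n_flips)]  # True means heads
    followers = [
        flips[i]
        for i in range(streak, n_flips)
        if all(flips[i - streak:i])
    ]
    return sum(followers) / len(followers), len(followers)


rate, n_streaks = heads_rate_after_streak()
print(f"P(heads | five heads in a row) is about {rate:.3f} ({n_streaks} streaks)")
```

Under independence the estimate stays close to one half: a run of heads does not make tails more likely on the next flip.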

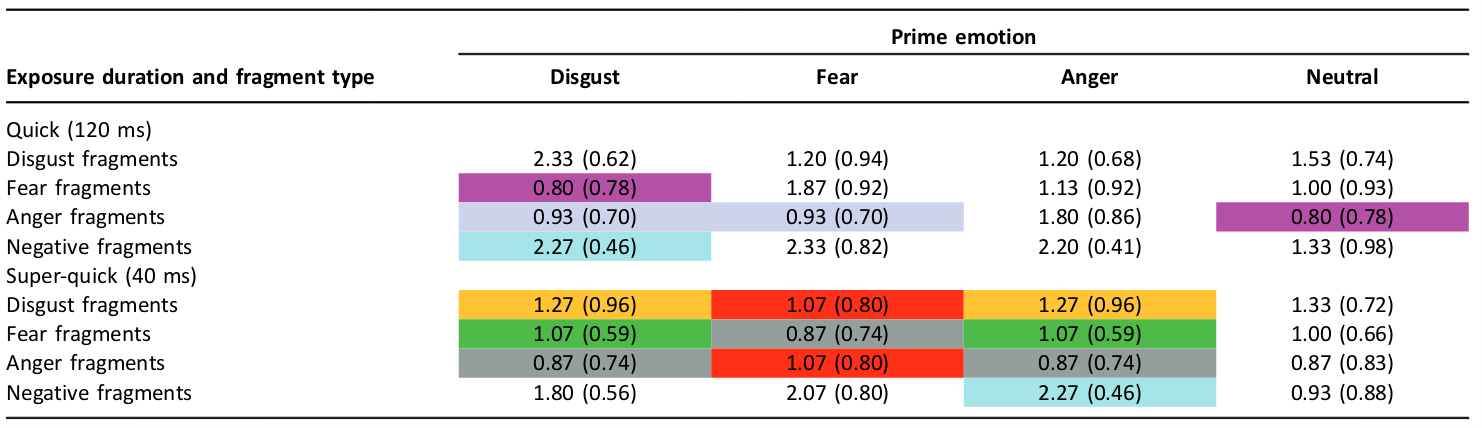

Illustrative of this inability to fabricate probabilistic processes is a table in a now retracted paper from the Stapel case (“Retraction of ‘the Secret Life of Emotions’ and ‘Emotion Elicitor or Emotion Messenger? Subliminal Priming Reveals Two Faces of Facial Expressions’” 2012; Ruys and Stapel 2008). In the original Table 1, reproduced here as Figure 1.1, 32 means and standard deviations are presented. Fifteen of these cells are duplicates of another cell (e.g., “0.87 (0.74)” occurs three times). Finding even one exact duplicate is extremely rare if the variables result from probabilistic processes, as in sampling theory.

Figure 1.1: Reproduction of Table 1 from the retracted Ruys and Stapel (2008) paper. The table shows 32 cells with ‘M (SD)’, of which 15 are direct duplicates of one of the other cells. The original version with highlighted duplicates can be found at https://osf.io/89mcn.
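
Counting duplicated cells of this kind is straightforward to automate. The sketch below is a minimal illustration; the cell values are made up for the example (only the format ‘M (SD)’ follows the figure) and are not transcribed from the retracted table, and counting every copy beyond the first as a duplicate is an assumption about the counting convention.

```python
from collections import Counter


def duplicate_cells(cells):
    """Find cell values that occur more than once.

    Returns a dict of repeated values with their counts, and the number
    of extra copies (occurrences beyond the first of each value).
    """
    counts = Counter(cells)
    repeated = {cell: n for cell, n in counts.items() if n > 1}
    extra_copies = sum(n - 1 for n in repeated.values())
    return repeated, extra_copies


# Made-up 'M (SD)' cells for illustration only.
cells = [
    "0.87 (0.74)", "0.87 (0.74)", "0.87 (0.74)",
    "1.12 (0.90)", "1.12 (0.90)", "0.65 (0.51)",
]
repeated, extra_copies = duplicate_cells(cells)
print(repeated, extra_copies)
```

A screen like this does not prove fabrication, but a large number of exact duplicates among means and standard deviations is a signal that warrants a closer look.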

Why reviewers and editors did not detect this remains a mystery, but it seems that they simply do not pay attention to potential indicators of misconduct in the publication process (Bornmann, Nast, and Daniel 2008). Similar issues with blatantly problematic results in papers that were later found to be due to misconduct have been noted in the medical sciences (Stewart and Feder 1987). Science has been regarded as a self-correcting system based on trust. This aligns with the idea that misconduct occurs because of “bad apples” (i.e., individual factors) and not because of a “bad barrel” (i.e., systemic factors), increasing trust in the scientific enterprise. However, the self-correcting system has been called a myth (Stroebe, Postmes, and Spears 2012) and an assumption that instigates complacency (Hettinger 2010); if reviewers and editors have no criteria that pertain to fabrication and falsification (Bornmann, Nast, and Daniel 2008), this implies that the current publication process is not always functioning properly as a self-correcting mechanism. Moreover, trust in research as a self-correcting system can be accompanied with complacency by colleagues in the research process.

Data fabrication is most frequently detected by researchers who scrutinize results closely, which ultimately results in whistleblowing. For example, Stapel’s misdeeds were detected by young researchers who were brave enough to blow the whistle. Although many regulations include clauses that help protect the whistleblowers, whistleblowing is known to represent a risk (Lubalin, Ardini, and Matheson 1995), not only because of potential backlash but also because the perpetrator is often closely associated with the whistleblower, potentially leading to negative career outcomes such as retracted articles on which one is co-author. This could explain why whistleblowers remain anonymous in only an estimated 8% of the cases (Price 1998). Negative consequences of a loss of anonymity include not only potential loss of a position, but also social and mental health problems (Lubalin and Matheson 1999; Allen and Dowell 2013). It therefore seems plausible that not all suspicions are reported.

How often data fabrication and falsification occur is an important question that can be answered in different ways; it can be approached as incidence or as prevalence. Incidence refers to new cases in a certain timeframe, whereas prevalence refers to all cases in the population at a certain time point. Misconduct cases are often widely publicized, which might create the image that more cases occur, but the number of cases seems relatively stable (Rhoades 2004). Prevalence of research misconduct is of great interest and, as aforementioned, a meta-analysis indicated that around 2% of surveyed researchers admit to fabricating or falsifying research at least once (Fanelli 2009).

The prevalence that is of greatest interest is that of how many research papers contain data that have been fabricated or falsified. Systematic data on this are unavailable, because papers are not evaluated to this end in an active manner (Bornmann, Nast, and Daniel 2008). Only one case study exists: the Journal of Cell Biology evaluates all research papers for cell image manipulation (Rossner and Yamada 2004; Bik, Casadevall, and Fang 2016a), a form of data fabrication/falsification. They have found that approximately 1% of all research papers that passed peer review (out of a total of over 3,000 submissions) were not published because of the detection of image manipulation (The Journal of Cell Biology 2015a).

1.3.3 How can it be prevented?

Notwithstanding discussion about reconciliation of researchers who have been found guilty of research misconduct (Cressey 2013), these researchers typically leave science after having been exposed. Hence, improving the chances of detecting misconduct may help not only in the correction of the scientific record, but also in the prevention of research misconduct. In this section we discuss how the detection of fabrication and falsification might be improved and what to do when misconduct is detected.

When research is suspected of data fabrication or falsification, whistleblowers can report these suspicions to institutions, professional associations, and journals. For example, institutions can launch investigations via their integrity offices. Typically, a complaint is submitted to the research integrity officer, who subsequently decides whether there are sufficient grounds for further investigation. In the United States, integrity officers have the possibility to sequester (that is, to retrieve) all data of the person in question. If there is sufficient evidence, a formal misconduct investigation or even a federal misconduct investigation by the Office of Research Integrity might be started. Professional associations can also launch some sort of investigation, if the complaint is made to the association and the respondent is a member of that association. Journals are also confronted with complaints about specific research papers and those affiliated with the Committee on Publication Ethics have a protocol for dealing with these kinds of allegations (see publicationethics.org/resources for details). The most direct way to improve detection of data fabrication is to investigate suspicions further and report them to your research integrity office. The potential negative consequences of reporting should be kept in mind, however, so it is best to report anonymously and via analog mail (digital files contain metadata with identifying information).

More indirectly, statistical tools can be applied to evaluate the veracity of research papers and raw data (Carlisle et al. 2015; Peeters, Klaassen, and van de Wiel 2015), which helps detect potential lapses of conduct. Statistical tools have been successfully applied in data fabrication cases, for instance the Stapel case (Levelt Committee, Drenth Committee, and Noort Committee 2012), the Fujii case (Carlisle 2012), and the cases of Smeesters and Sanna (Simonsohn 2013). Interested readers are referred to Buyse et al. (1999) for a review of statistical methods to detect potential data fabrication.
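As a purely illustrative sketch of what such a statistical tool can look like, the Python snippet below tests whether the terminal (last) digits of a set of integer-valued observations are plausibly uniform, in the spirit of the terminal digit analyses listed in the references (Mosimann et al. 2002). It is not the method used in any of the cases cited above. The function names and the choice of a .05 threshold are assumptions made for this example, and a flagged result is a reason for follow-up, never proof of fabrication, because many legitimate measurement processes also produce non-uniform terminal digits.

```python
from collections import Counter

# Standard table value: critical chi-square for 9 degrees of freedom at alpha = .05.
CHI2_CRIT_DF9_ALPHA05 = 16.919


def terminal_digit_chi2(values):
    """Chi-square statistic for uniformity of the last digit of integer values.

    Hypothetical helper for illustration; assumes the terminal digit carries
    no substantive meaning, so all ten digits are expected equally often.
    """
    digits = [abs(int(v)) % 10 for v in values]
    n = len(digits)
    if n == 0:
        raise ValueError("need at least one value")
    expected = n / 10
    counts = Counter(digits)
    return sum((counts.get(d, 0) - expected) ** 2 / expected for d in range(10))


def flag_for_follow_up(values):
    """Return True if the terminal digits deviate from uniformity at alpha = .05."""
    return terminal_digit_chi2(values) > CHI2_CRIT_DF9_ALPHA05
```

With small samples the chi-square approximation is poor, so a check like this is only informative when each digit has a reasonable expected count.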

Besides using statistics to monitor for potential problems, authors and principal investigators are responsible for the results in the paper and should therefore invest in verifying them, which enables earlier detection of problems even if these problems are the result of mere sloppiness or honest error. Even though it is not feasible for all authors to verify all results, ideally each result should be verified by at least one co-author. As mentioned earlier, peer review does not weed out all major problems (Bornmann, Nast, and Daniel 2008) and should not be trusted blindly.
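One low-cost form of such verification is to recompute reported values from the statistics they are based on. The following minimal sketch, with hypothetical function names and an assumed rounding tolerance, recomputes a two-tailed p-value from a reported z statistic and checks whether it agrees with the reported p-value up to rounding. It illustrates the idea only and does not reproduce any particular published tool.

```python
import math


def two_tailed_p_from_z(z):
    """Two-tailed p-value for a standard normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


def is_consistent(z, reported_p, decimals=2):
    """Check whether a reported p-value matches the recomputed one up to rounding.

    `decimals` is the number of decimals the p-value was reported with
    (an assumption the checker has to supply).
    """
    recomputed = two_tailed_p_from_z(z)
    return abs(recomputed - reported_p) <= 0.5 * 10 ** -decimals
```

An inconsistency found this way may just as well reflect a typo or a one-tailed test as anything more serious, which is why it is best resolved among co-authors before submission.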

Institutions could facilitate detection of data fabrication and falsification by implementing data auditing. Data auditing is the independent verification of research results published in a paper (Shamoo 2006). This goes hand in hand with co-authors verifying results, but is done by a researcher not directly affiliated with the research project. Auditing data is common practice in research that is subject to governmental oversight, for instance drug trials that are audited by the Food and Drug Administration (Seife 2015).

Papers that report fabricated or falsified data are typically retracted. The decision to retract is often (albeit not necessarily) made after the completion of a formal inquiry and/or investigation of research misconduct by the academic institution, employer, funding organization, and/or oversight body. Because much academic work is done for hire, the employer can request a retraction from the publisher of the journal in which the article appeared. Often, the publisher then consults with the editor (and sometimes also with the organization that owns the journal title, such as a professional society) to decide whether to retract. Such processes can be legally complex if the researcher who was found guilty of research misconduct opposes the retraction. The retraction notice should ideally provide readers with the main reasons for the retraction, although quite often the notices lack necessary information (Van Noorden 2011). The popular blog Retraction Watch regularly reports on retractions and often provides additional information on the reasons for retraction that other parties involved in the process (co-authors, whistleblowers, the accused researcher, the (former) employer, and the publisher) are sometimes reluctant to provide (Marcus and Oransky 2014). In some cases, the editors of a journal may decide to publish an editorial expression of concern if there are sufficient grounds to doubt the data in a paper that is being subjected to a formal investigation of research misconduct.

Many retracted articles are still cited after the retraction has been issued (Bornemann-Cimenti, Szilagyi, and Sandner-Kiesling 2015; Pfeifer and Snodgrass 1990). Additionally, a retraction that is warranted following a misconduct investigation might not be carried out by the journal, the original content might simply be deleted, or legal threats might prevent the work from being retracted (Elia, Wager, and Tramèr 2014). If retractions do not occur even though they are warranted, their negative effects, for instance decreased author citations (Lu et al. 2013), are nullified, reducing the costs of committing misconduct.

1.4 Conclusion

This chapter provides an overview of the research practice spectrum, with responsible conduct of research on one end and research misconduct on the other. In sum, transparent research practices are proposed as an embodiment of scientific norms and as a way to address both questionable research practices and research misconduct, thereby inducing better research practices. This would not only improve the documentation and verification of research results; it would also help create a more open environment in which researchers actively discuss ethical problems and handle them responsibly, promoting good research practices. This might help reduce both questionable research practices and research misconduct.

References

Allen, Mary, and Robin Dowell. 2013. “Retrospective Reflections of a Whistleblower: Opinions on Misconduct Responses.” Accountability in Research 20 (5-6): 339–48. doi:10.1080/08989621.2013.822249.

American Psychological Association. 2010a. “Ethical Principles of Psychologists and Code of Conduct.” http://www.apa.org/ethics/code/principles.pdf.

Anderson, Melissa S, Aaron S Horn, Kelly R Risbey, Emily A Ronning, Raymond De Vries, and Brian C Martinson. 2007. “What Do Mentoring and Training in the Responsible Conduct of Research Have to Do with Scientists’ Misbehavior? Findings from a National Survey of NIH-funded Scientists.” Academic Medicine 82 (9): 853. doi:10.1097/ACM.0b013e31812f764c.

Anderson, Melissa S, Brian C Martinson, and Raymond De Vries. 2007. “Normative Dissonance in Science: Results from a National Survey of U.S. Scientists.” Journal of Empirical Research on Human Research Ethics 2 (4): 3–14. doi:10.1525/jer.2007.2.4.3.

Anderson, Melissa S, Emily A Ronning, Raymond De Vries, and Brian C Martinson. 2010. “Extending the Mertonian Norms: Scientists’ Subscription to Norms of Research.” The Journal of Higher Education 81 (3): 366–93. doi:10.1353/jhe.0.0095.

Armitage, P., C. K. McPherson, and B. C. Rowe. 1969. “Repeated Significance Tests on Accumulating Data.” Journal of the Royal Statistical Society. Series A (General) 132 (2). JSTOR: 235. doi:10.2307/2343787.

Bakker, Marjan, and Jelte M Wicherts. 2011. “The (Mis)reporting of Statistical Results in Psychology Journals.” Behavior Research Methods 43 (3): 666–78. doi:10.3758/s13428-011-0089-5.

Bik, Elisabeth M., Arturo Casadevall, and Ferric C. Fang. 2016a. “The Prevalence of Inappropriate Image Duplication in Biomedical Research Publications.” mBio 7 (3). American Society for Microbiology: e00809–16. doi:10.1128/mbio.00809-16.

Bornemann-Cimenti, Helmar, Istvan S Szilagyi, and Andreas Sandner-Kiesling. 2015. “Perpetuation of Retracted Publications Using the Example of the Scott S. Reuben Case: Incidences, Reasons and Possible Improvements.” Science and Engineering Ethics, 7 Jul. doi:10.1007/s11948-015-9680-y.

Bornmann, Lutz, Irina Nast, and Hans-Dieter Daniel. 2008. “Do Editors and Referees Look for Signs of Scientific Misconduct When Reviewing Manuscripts? A Quantitative Content Analysis of Studies That Examined Review Criteria and Reasons for Accepting and Rejecting Manuscripts for Publication.” Scientometrics 77 (3). Springer Netherlands: 415–32. doi:10.1007/s11192-007-1950-2.

Buyse, M, S L George, S Evans, N L Geller, J Ranstam, B Scherrer, E Lesaffre, et al. 1999. “The Role of Biostatistics in the Prevention, Detection and Treatment of Fraud in Clinical Trials.” Statistics in Medicine 18 (24): 3435–51. doi:10.1002/(SICI)1097-0258(19991230)18:24<3435::AID-SIM365>3.0.CO;2-O.

Carlisle, J. B. 2012. “The Analysis of 168 Randomised Controlled Trials to Test Data Integrity.” Anaesthesia 67 (5): 521–37. doi:10.1111/j.1365-2044.2012.07128.x.

Carlisle, J. B., F. Dexter, J. J. Pandit, S. L. Shafer, and S. M. Yentis. 2015. “Calculating the Probability of Random Sampling for Continuous Variables in Submitted or Published Randomised Controlled Trials.” Anaesthesia 70 (7): 848–58. doi:10.1111/anae.13126.

Chambers, Christopher D. 2015. “Ten Reasons Why Journals Must Review Manuscripts Before Results Are Known.” Addiction 110 (1): 10–11. doi:10.1111/add.12728.

Cohen, Jacob. 1994. “The Earth Is Round (P < .05).” American Psychologist 49 (12): 997–1003. doi:10.1037/0003-066X.49.12.997.

Cressey, Daniel. 2013. “‘Rehab’ Helps Errant Researchers Return to the Lab.” Nature News 493 (7431): 147. doi:10.1038/493147a.

Elia, Nadia, Elizabeth Wager, and Martin R. Tramèr. 2014. “Fate of Articles That Warranted Retraction Due to Ethical Concerns: A Descriptive Cross-Sectional Study.” Edited by K. Brad Wray. PLoS ONE 9 (1). Public Library of Science (PLoS): e85846. doi:10.1371/journal.pone.0085846.

Fanelli, Daniele. 2009. “How Many Scientists Fabricate and Falsify Research? A Systematic Review and Meta-Analysis of Survey Data.” PLoS ONE 4 (5): e5738. doi:10.1371/journal.pone.0005738.

Fang, Ferric C, R Grant Steen, and Arturo Casadevall. 2012. “Misconduct Accounts for the Majority of Retracted Scientific Publications.” Proceedings of the National Academy of Sciences of the United States of America 109 (42): 17028–33. doi:10.1073/pnas.1212247109.

Franco, Annie, Neil Malhotra, and Gabor Simonovits. 2014. “Publication Bias in the Social Sciences: Unlocking the File Drawer.” Science 345 (6203): 1502–5. doi:10.1126/science.1255484.

Franco, Annie, Neil Malhotra, and Gabor Simonovits. 2016. “Underreporting in Psychology Experiments: Evidence from a Study Registry.” Social Psychological and Personality Science 7 (1): 8–12. doi:10.1177/1948550615598377.

Haldane, J B S. 1948. “The faking of genetical results.” Eureka 6: 21–28. http://wayback.archive.org/web/20170206144438/http://www.archim.org.uk/eureka/27/faking.html.

Hettinger, Thomas P. 2010. “Misconduct: Don’t Assume Science Is Self-Correcting.” Nature 466 (7310): 1040. doi:10.1038/4661040b.

John, Leslie K, George Loewenstein, and Drazen Prelec. 2012. “Measuring the prevalence of questionable research practices with incentives for truth telling.” Psychological Science 23 (5): 524–32. doi:10.1177/0956797611430953.

Kerr, Norbert L. 1998. “HARKing: Hypothesizing After the Results Are Known.” Personality and Social Psychology Review 2 (3). SAGE Publications: 196–217. doi:10.1207/s15327957pspr0203_4.

Klein, Richard A., Kate A Ratliff, Michelangelo Vianello, Reginald B Adams Jr., Štěpán Bahník, Michael J Bernstein, Konrad Bocian, et al. 2014. “Investigating Variation in Replicability.” Social Psychology 45 (3): 142–52. doi:10.1027/1864-9335/a000178.

Koppelman-White, Elysa. 2006. “Research Misconduct and the Scientific Process: Continuing Quality Improvement.” Accountability in Research 13 (3): 225–46. doi:10.1080/08989620600848611.

Kornfeld, Donald S. 2012. “Research Misconduct: The Search for a Remedy.” Academic Medicine 87 (7): 877–82. doi:10.1097/ACM.0b013e318257ee6a.

Krawczyk, Michal, and Ernesto Reuben. 2012. “(Un)available Upon Request: Field Experiment on Researchers’ Willingness to Share Supplementary Materials.” Accountability in Research 19 (3): 175–86. doi:10.1080/08989621.2012.678688.

Levelt Committee, Drenth Committee, and Noort Committee. 2012. “Flawed Science: The Fraudulent Research Practices of Social Psychologist Diederik Stapel.” https://www.commissielevelt.nl/.

Lu, Susan Feng, Ginger Zhe Jin, Brian Uzzi, and Benjamin Jones. 2013. “The Retraction Penalty: Evidence from the Web of Science.” Scientific Reports 3 (6 Nov): 3146. doi:10.1038/srep03146.

Lubalin, James S, and Jennifer L Matheson. 1999. “The Fallout: What Happens to Whistleblowers and Those Accused but Exonerated of Scientific Misconduct?” Science and Engineering Ethics 5 (2). Kluwer Academic Publishers: 229–50. doi:10.1007/s11948-999-0014-9.

Lubalin, James S, Mary-Anne E Ardini, and Jennifer L Matheson. 1995. “Consequences of Whistleblowing for the Whistleblower in Misconduct in Science Cases.” Research Triangle Institute. http://web.archive.org/web/20150819064509/https://ori.hhs.gov/sites/default/files/final.pdf.

Makel, Matthew C, Jonathan A Plucker, and Boyd Hegarty. 2012. “Replications in Psychology Research: How Often Do They Really Occur?” Perspectives on Psychological Science 7 (6): 537–42. doi:10.1177/1745691612460688.

Marcus, Adam, and Ivan Oransky. 2014. “What Studies of Retractions Tell Us.” Journal of Microbiology & Biology Education 15 (2): 151–54. doi:10.1128/jmbe.v15i2.855.

Margraf, Jürgen. 2015. “Zur Lage Der Psychologie.” Psychologische Rundschau; Ueberblick Uber Die Fortschritte Der Psychologie in Deutschland, Oesterreich, Und Der Schweiz 66 (1): 1–30. doi:10.1026/0033-3042/a000247.

Merton, Robert K. 1942. “A Note on Science and Democracy.” J. Legal & Pol. Soc. 1. HeinOnline: 115.

Mitroff, Ian I. 1974. “Norms and Counter-Norms in a Select Group of the Apollo Moon Scientists: A Case Study of the Ambivalence of Scientists.” American Sociological Review 39 (4). American Sociological Association: 579–95. doi:10.2307/2094423.

Mosimann, James E, Claire V Wiseman, and Ruth E Edelman. 1995. “Data Fabrication: Can People Generate Random Digits?” Accountability in Research 4 (1): 31–55. doi:10.1080/08989629508573866.

Mosimann, James, John Dahlberg, Nancy Davidian, and John Krueger. 2002. “Terminal Digits and the Examination of Questioned Data.” Accountability in Research 9 (2): 75–92. doi:10.1080/08989620212969.

National Academy of Sciences, National Academy of Engineering, and Institute of Medicine. 1992. Responsible Science, Volume I: Ensuring the Integrity of the Research Process. Washington, DC: The National Academies Press. doi:10.17226/1864.

Nosek, B A, G Alter, G C Banks, D Borsboom, S D Bowman, S J Breckler, S Buck, et al. 2015. “Promoting an Open Research Culture.” Science 348 (6242): 1422–5. doi:10.1126/science.aab2374.

Nosek, Brian A, and Yoav Bar-Anan. 2012. “Scientific Utopia: I. Opening Scientific Communication.” Psychological Inquiry 23 (3). Taylor & Francis: 217–43. doi:10.1080/1047840X.2012.692215.

Nosek, Brian A, Jeffrey R Spies, and Matt Motyl. 2012. “Scientific Utopia: II. Restructuring Incentives and Practices to Promote Truth over Publishability.” Perspectives on Psychological Science 7 (6): 615–31. doi:10.1177/1745691612459058.

Nuijten, Michèle B., Chris H. J. Hartgerink, Marcel A. L. M. Van Assen, Sacha Epskamp, and Jelte M. Wicherts. 2015. “The Prevalence of Statistical Reporting Errors in Psychology (1985–2013).” Behavior Research Methods 48 (4). Springer Nature: 1205–26. doi:10.3758/s13428-015-0664-2.

Office of Science and Technology Policy. 2000. “Federal Policy on Research Misconduct.” https://web.archive.org/web/20150910131244/https://www.federalregister.gov/articles/2000/12/06/00-30852/executive-office-of-the-president-federal-policy-on-research-misconduct-preamble-for-research#h-16.

Open Science Collaboration. 2015. “Estimating the Reproducibility of Psychological Science.” Science 349 (6251). doi:10.1126/science.aac4716.

Peeters, C A W, C A J Klaassen, and M A van de Wiel. 2015. “Meta-Response to Public Discussions of the Investigation into Publications by Dr. Förster.” University of Amsterdam. https://wayback.archive.org/web/20180709102400/https://www.uva.nl/binaries/content/assets/uva/nl/persvoorlichting/uva-nieuws/meta-response.pdf?1436179086511.

Peiffer, Ann M, Christina E Hugenschmidt, and Paul J Laurienti. 2011. “Ethics in 15 Min Per Week.” Science and Engineering Ethics 17 (2): 289–97. doi:10.1007/s11948-010-9197-3.

Pfeifer, M P, and G L Snodgrass. 1990. “The Continued Use of Retracted, Invalid Scientific Literature.” JAMA 263 (10): 1420–3. doi:10.1001/jama.1990.03440100140020.

Plemmons, Dena K, Suzanne A Brody, and Michael W Kalichman. 2006. “Student Perceptions of the Effectiveness of Education in the Responsible Conduct of Research.” Science and Engineering Ethics 12 (3): 571–82. doi:10.1007/s11948-006-0055-2.

Price, A R. 1998. “Anonymity and Pseudonymity in Whistleblowing to the U.S. Office of Research Integrity.” Academic Medicine 73 (5): 467–72. doi:10.1097/00001888-199805000-00009.

Resnik, David B, and C Neal Stewart Jr. 2012. “Misconduct Versus Honest Error and Scientific Disagreement.” Accountability in Research 19 (1): 56–63. doi:10.1080/08989621.2012.650948.

“Retraction of ‘the Secret Life of Emotions’ and ‘Emotion Elicitor or Emotion Messenger? Subliminal Priming Reveals Two Faces of Facial Expressions’.” 2012. Psychological Science 23 (7): 828–28. doi:10.1177/0956797612453137.

Rhoades, Lawrence J. 2004. “ORI Closed Investigations into Misconduct Allegations Involving Research Supported by the Public Health Service: 1994-2003.” Office of Research Integrity; ori.hhs.gov. https://wayback.archive.org/web/20180709101834/https://ori.hhs.gov/sites/default/files/Investigations1994-2003-2.pdf.

Rosenthal, Robert. 1979. “The File Drawer Problem and Tolerance for Null Results.” Psychological Bulletin 86 (3). American Psychological Association (APA): 638–41. doi:10.1037/0033-2909.86.3.638.

Rossner, Mike, and Kenneth M Yamada. 2004. “What’s in a Picture? The Temptation of Image Manipulation.” The Journal of Cell Biology 166 (1): 11–15. doi:10.1083/jcb.200406019.

Ruys, Kirsten I, and Diederik A Stapel. 2008. “Emotion Elicitor or Emotion Messenger?: Subliminal Priming Reveals Two Faces of Facial Expressions [Retracted].” Psychological Science 19 (6): 593–600. doi:10.1111/j.1467-9280.2008.02128.x.

Savage, Caroline J, and Andrew J Vickers. 2009. “Empirical Study of Data Sharing by Authors Publishing in PLoS Journals.” PLoS ONE 4 (9): e7078. doi:10.1371/journal.pone.0007078.

Seife, Charles. 2015. “Research Misconduct Identified by the US Food and Drug Administration: Out of Sight, Out of Mind, Out of the Peer-Reviewed Literature.” JAMA Internal Medicine 175 (4): 567–77. doi:10.1001/jamainternmed.2014.7774.

Shamoo, A E, and D B Resnik. 2009. Responsible Conduct of Research. New York, NY: Oxford University Press.

Shamoo, Adil E. 2006. “Data Audit Would Reduce Unethical Behaviour.” Nature 439 (7078): 784. doi:10.1038/439784c.

Sijtsma, Klaas, Coosje L S Veldkamp, and Jelte M Wicherts. 2015. “Improving the Conduct and Reporting of Statistical Analysis in Psychology.” Psychometrika, 28 Mar. doi:10.1007/s11336-015-9444-2.

Simmons, Joseph P, Leif D Nelson, and Uri Simonsohn. 2011. “False-Positive Psychology: Undisclosed Flexibility in Data Collection and Analysis Allows Presenting Anything as Significant.” Psychological Science 22 (11): 1359–66. doi:10.1177/0956797611417632.

Simonsohn, Uri. 2013. “Just Post It: The Lesson from Two Cases of Fabricated Data Detected by Statistics Alone.” Psychological Science 24 (10): 1875–88. doi:10.1177/0956797613480366.

Steneck, Nicholas H. 2006. “Fostering Integrity in Research: Definitions, Current Knowledge, and Future Directions.” Science and Engineering Ethics 12 (1). Springer Nature: 53–74. doi:10.1007/pl00022268.

Stewart, W W, and N Feder. 1987. “The Integrity of the Scientific Literature.” Nature 325 (6101): 207–14. doi:10.1038/325207a0.

Stroebe, Wolfgang, Tom Postmes, and Russell Spears. 2012. “Scientific Misconduct and the Myth of Self-Correction in Science.” Perspectives on Psychological Science 7 (6): 670–88. doi:10.1177/1745691612460687.

The Journal of Cell Biology. 2015a. “About the Journal.” https://web.archive.org/web/20150911132421/http://jcb.rupress.org/site/misc/about.xhtml.

Tversky, A, and D Kahneman. 1974. “Judgment Under Uncertainty: Heuristics and Biases.” Science 185 (4157): 1124–31. doi:10.1126/science.185.4157.1124.

Van Assen, Marcel A L M, Robbie C M Van Aert, Michèle B. Nuijten, and Jelte M Wicherts. 2014. “Why Publishing Everything Is More Effective Than Selective Publishing of Statistically Significant Results.” PLoS ONE 9 (1): e84896. doi:10.1371/journal.pone.0084896.

Van Noorden, Richard. 2011. “Science Publishing: The Trouble with Retractions.” Nature 478 (7367): 26–28. doi:10.1038/478026a.

Veldkamp, Coosje L. S., Michèle B. Nuijten, Linda Dominguez-Alvarez, Marcel A. L. M. Van Assen, and Jelte M. Wicherts. 2014. “Statistical Reporting Errors and Collaboration on Statistical Analyses in Psychological Science.” PLoS ONE 9 (12): e114876. doi:10.1371/journal.pone.0114876.

Wagenmakers, Eric-Jan, Ruud Wetzels, Denny Borsboom, Han L J van der Maas, and Rogier A Kievit. 2012. “An Agenda for Purely Confirmatory Research.” Perspectives on Psychological Science 7 (6): 632–38. doi:10.1177/1745691612463078.

Whitebeck, Caroline. 2001. “Group Mentoring to Foster the Responsible Conduct of Research.” Science and Engineering Ethics 7 (4). Kluwer Academic Publishers: 541–58. doi:10.1007/s11948-001-0012-z.

Wicherts, Jelte M. 2011. “Psychology Must Learn a Lesson from Fraud Case.” Nature 480 (7375): 7. doi:10.1038/480007a.

Wicherts, Jelte M, and Marcel A L M Van Assen. 2012. “Research Fraud: Speed up Reviews of Misconduct.” Nature 488 (7413): 591. doi:10.1038/488591b.

Wicherts, Jelte M., Denny Borsboom, Judith Kats, and Dylan Molenaar. 2006. “The Poor Availability of Psychological Research Data for Reanalysis.” American Psychologist 61 (7). American Psychological Association (APA): 726–28. doi:10.1037/0003-066x.61.7.726.

Wicherts, Jelte M., Chris H. J. Hartgerink, and Raoul P.P.P. Grasman. 2016. “The Growth of Psychology and Its Corrective Mechanisms: A Bibliometric Analysis (1950-2015).” Manuscript in preparation.

Wigboldus, Daniel H J, and Ron Dotsch. 2015. “Encourage Playing with Data and Discourage Questionable Reporting Practices.” Psychometrika, 28 Mar. doi:10.1007/s11336-015-9445-1.